Mini Series: Efficient Engineering 101

Developing complex hardware in a fast-growing engineering team is not an easy task. While a small team initially grows quite organically, every team eventually hits the point where, for the first time, it has to get serious about defining processes and tools.

Usually that point is reached when you feel that you keep adding people to the team but are not reaching your development goals fast enough, or not at a high enough quality. You might feel that you are losing time in inefficient technical meetings, in misunderstandings, or in rework that could have been avoided.

At Valispace we have had the privilege of seeing how many engineering teams organize their work and tooling, and we would like to share some insights. Spoiler alert: there is no single best way to organize your team, but there are choices you have to make, and we would like to help you by making those choices explicit.

In this mini-series we are going to touch on requirement- vs. test-driven design, milestone reviews vs. agile reviews, document-driven vs. data-driven, Kanban vs. WBS and a few other topics. If you would like us to cover specific topics, feel free to reach out to engineering@valispace.com.

Part 1: Requirement-Driven vs. Test-Driven

You might not get to choose whether your product development comes with customer requirements or not. But you do get to choose whether you use requirements as the internal driving design tool, or whether you follow a more iterative, test-driven approach of constant small improvements.

Requirement-Driven Engineering

Top-level mission requirements are broken down systematically into many System-, Subsystem- and Unit-level requirements. At the end of the design/build process, the requirements are verified to ensure that the design/product meets expectations.
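To make the breakdown concrete, here is a minimal Python sketch of a requirement tree. It assumes each requirement is a node with child requirements and a simple verified flag; the class and function names and the example requirements are hypothetical, not taken from any particular requirements tool. A parent only counts as verified when every leaf below it is, and `trace` returns the chain from the mission level down to a given requirement.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class Requirement:
    """One node in the breakdown: mission -> system -> subsystem -> unit."""
    req_id: str
    text: str
    level: str
    verified: bool = False
    children: list[Requirement] = field(default_factory=list)

    def add(self, child: Requirement) -> Requirement:
        """Attach a derived (child) requirement and return it for chaining."""
        self.children.append(child)
        return child

    def leaves(self) -> list[Requirement]:
        """Lowest-level requirements, i.e. the ones verified by test or analysis."""
        if not self.children:
            return [self]
        result: list[Requirement] = []
        for child in self.children:
            result.extend(child.leaves())
        return result

    def is_verified(self) -> bool:
        """A requirement counts as verified only if every leaf beneath it is."""
        return all(leaf.verified for leaf in self.leaves())


def trace(root: Requirement, req_id: str) -> list[str] | None:
    """Return the IDs from root down to req_id, or None if req_id is not in the tree."""
    if root.req_id == req_id:
        return [root.req_id]
    for child in root.children:
        path = trace(child, req_id)
        if path is not None:
            return [root.req_id] + path
    return None


# Hypothetical example breakdown.
mission = Requirement("MIS-1", "Downlink payload data every orbit", "mission")
system = mission.add(Requirement("SYS-1", "Provide an S-band downlink", "system"))
comms = system.add(Requirement("COM-1", "Transmitter output of at least 2 W", "subsystem"))
power = system.add(Requirement("EPS-1", "Supply 15 W to the transmitter", "subsystem"))
comms.verified = True

print(mission.is_verified())    # False: EPS-1 is still open
print(trace(mission, "EPS-1"))  # ['MIS-1', 'SYS-1', 'EPS-1']
```

Keeping the tree as data rather than as a document is what makes the "clear tracing" from the pros below cheap to query.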

Pros

+ Ensures that all necessary functions are accounted for across all elements of the mission.

+ Allocating engineering budgets (e.g. how much power each subsystem may draw) and defining interfaces allows different disciplines to work in parallel and sets clear expectations in supplier management.

+ Provides clear traceability of design decisions and of which performance must be met at every level.
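As an illustration of budget allocation, here is a small Python sketch of a power budget check. It assumes a simple scheme: a fixed fraction of the system budget is held back as system margin (20 % here, an arbitrary choice), each subsystem receives an allocation, and each team reports its current best estimate. The class name, function name and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Allocation:
    subsystem: str
    allocated_w: float  # power budget handed to the subsystem team
    estimate_w: float   # the team's current best estimate


def check_power_budget(system_budget_w, allocations, system_margin=0.2):
    """Return a list of budget violations (an empty list means everything fits).

    system_margin is the fraction of the system budget held back at system level.
    """
    issues = []
    usable_w = system_budget_w * (1.0 - system_margin)
    total_allocated_w = sum(a.allocated_w for a in allocations)
    if total_allocated_w > usable_w:
        issues.append(
            f"allocations of {total_allocated_w:.1f} W exceed the usable {usable_w:.1f} W"
        )
    # Each team only has to stay within its own allocation, which is what
    # lets the disciplines work in parallel.
    for a in allocations:
        if a.estimate_w > a.allocated_w:
            issues.append(
                f"{a.subsystem}: estimate {a.estimate_w:.1f} W exceeds allocation {a.allocated_w:.1f} W"
            )
    return issues


# Hypothetical numbers for illustration only.
allocations = [
    Allocation("ADCS", allocated_w=20.0, estimate_w=18.0),
    Allocation("COMMS", allocated_w=25.0, estimate_w=27.0),
    Allocation("PAYLOAD", allocated_w=30.0, estimate_w=30.0),
]
for issue in check_power_budget(100.0, allocations):
    print(issue)  # COMMS: estimate 27.0 W exceeds allocation 25.0 W
```

The same pattern applies to mass, data rate or any other budget you break down across subsystems.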

Cons

– You can quickly accumulate more requirements than you can handle, and the effort to test and re-test them on every level can end up outweighing their benefits.

– It can give you the false impression that your system will work once every requirement is met. Validation of the whole system cannot be replaced by verification of requirements.

– Requirements are too often ambiguous, open to interpretation, or contradictory. When the team relies too heavily on the requirement text without a clear system model, these problems are only found during the validation phase.

– The common disconnect between the requirement text and the actual design makes it easy to overlook design flaws: with too many requirements to keep track of in your head, problems might only become visible once the product is already built.
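One way to reduce the ambiguity and contradiction problems above is to capture at least the numeric requirements as data rather than free text. The Python sketch below assumes each such requirement is a simple lower or upper bound on a named parameter (all names and values are hypothetical) and flags parameters whose bounds cannot all be met at once. It only catches the simplest kind of contradiction; conflicts that emerge through the physics of the design still need a proper system model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BoundRequirement:
    req_id: str
    parameter: str
    kind: str  # "min" or "max"
    value: float


def find_conflicts(requirements):
    """Return (parameter, min_req_id, max_req_id) for every parameter whose
    tightest lower bound lies above its tightest upper bound."""
    tightest_min = {}  # parameter -> requirement with the highest "min"
    tightest_max = {}  # parameter -> requirement with the lowest "max"
    for req in requirements:
        if req.kind == "min":
            current = tightest_min.get(req.parameter)
            if current is None or req.value > current.value:
                tightest_min[req.parameter] = req
        elif req.kind == "max":
            current = tightest_max.get(req.parameter)
            if current is None or req.value < current.value:
                tightest_max[req.parameter] = req
        else:
            raise ValueError(f"unknown bound kind: {req.kind!r}")

    conflicts = []
    for parameter, low in tightest_min.items():
        high = tightest_max.get(parameter)
        if high is not None and low.value > high.value:
            conflicts.append((parameter, low.req_id, high.req_id))
    return conflicts


# Hypothetical requirements written by different teams.
requirements = [
    BoundRequirement("EPS-3", "battery_capacity_wh", "min", 120.0),
    BoundRequirement("MECH-2", "battery_capacity_wh", "max", 100.0),
    BoundRequirement("THM-1", "panel_temp_c", "max", 85.0),
    BoundRequirement("THM-2", "panel_temp_c", "min", -40.0),
]
print(find_conflicts(requirements))  # [('battery_capacity_wh', 'EPS-3', 'MECH-2')]
```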

How to get started?

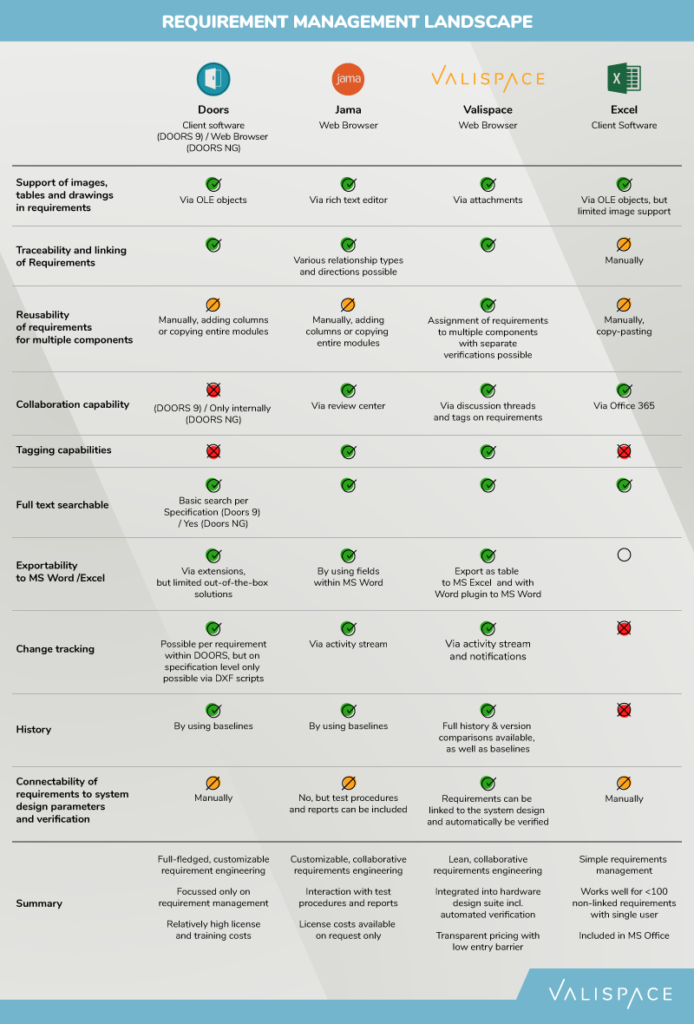

Here is a comparison chart for requirement tools that help you get an overview:

Test-Driven Engineering

Test-driven engineering is still in its infancy in the hardware development world.

In test-driven engineering you design your test bench at a very early stage of the project. Tests are automated as far as possible. Any new design (parts, software, etc.) is integrated into the test bench as soon as possible, and all tests are re-run nightly.
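The nightly loop can be sketched in a few lines of Python. This assumes each check is a plain function that raises `AssertionError` on failure; the registry, the check names and the `read_bus_voltage` helper are hypothetical placeholders for whatever actually talks to your bench. Scheduling the run every night (for example with cron or a CI server) is left out.

```python
from datetime import datetime, timezone

CHECKS = {}


def bench_check(func):
    """Register a test bench check; it passes if it returns without raising."""
    CHECKS[func.__name__] = func
    return func


def run_all_checks():
    """Run every registered check and return {name: status}."""
    results = {}
    for name, check in CHECKS.items():
        try:
            check()
            results[name] = "PASS"
        except AssertionError as exc:
            results[name] = f"FAIL: {exc}"
        except Exception as exc:  # e.g. equipment not reachable
            results[name] = f"ERROR: {type(exc).__name__}: {exc}"
    return results


def read_bus_voltage():
    # Hypothetical stand-in for a real measurement on the bench.
    return 28.1


# Note: the checks use assert, which Python skips when run with -O.
@bench_check
def bus_voltage_in_range():
    voltage = read_bus_voltage()
    assert 27.0 <= voltage <= 29.0, f"bus voltage {voltage} V out of range"


@bench_check
def startup_time_below_limit():
    startup_s = 4.2  # hypothetical value; would come from the bench log
    assert startup_s < 3.0, f"startup took {startup_s} s"


if __name__ == "__main__":
    stamp = datetime.now(timezone.utc).isoformat(timespec="seconds")
    for name, status in run_all_checks().items():
        print(f"{stamp} {name}: {status}")
```

Separating FAIL (the product misbehaved) from ERROR (the bench itself had a problem) helps keep the nightly status trustworthy.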

Pros

+ A very clear picture of the product's current state at any point in time.

+ De-risks the project by catching unforeseen mistakes, incompatibilities and problems very early in the design cycle, which reduces the cost and time of the associated re-designs.

+ Forces the team to focus on key technical KPIs, which should be improved in an iterative design process.

Cons

– Not everything relevant can be tested daily in an automated fashion. For example, mechanical and thermal tests might only be possible once every few months, and a full Monte Carlo ADCS (attitude determination and control system) simulation with hardware in the loop might take weeks to run with changed parameters, rather than fitting into the hours available each day.

– Some properties cannot be detected through tests at all and have to be thoroughly analyzed instead (e.g. system reliability over time). If you rely only on the test-driven approach, you might overlook crucial problems that a thorough analysis would have caught.

– Surprises and conflicts between sub-teams may arise when parts that affect other subsystems need to be changed but there are no clear technical interfaces defined between disciplines.
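Reliability is a good example of a property that is analyzed rather than tested. Under the common textbook assumption of constant failure rates, units in series (where any single failure takes the system down) combine as R(t) = exp(-(λ₁ + λ₂ + ... + λₙ)·t). A rate of 10⁻⁶ per hour corresponds to a mean time between failures of 10⁶ hours, well over a century, so it cannot realistically be demonstrated on a nightly test bench. The Python sketch below uses hypothetical failure rates; real reliability analyses are considerably more detailed, e.g. accounting for redundancy.

```python
import math


def series_reliability(failure_rates_per_h, mission_h):
    """Probability that every unit survives mission_h hours.

    Assumes constant failure rates (exponential model) and a series system,
    i.e. the system fails as soon as any single unit fails:
        R(t) = exp(-(lambda_1 + ... + lambda_n) * t)
    """
    return math.exp(-sum(failure_rates_per_h) * mission_h)


# Hypothetical failure rates (per hour) for three units and a 5-year mission.
rates = [2e-6, 5e-6, 1e-6]
mission_h = 5 * 365 * 24  # 43,800 h
print(f"R = {series_reliability(rates, mission_h):.3f}")  # R = 0.704
```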

How to get started?

A typical method used in test-driven hardware development is to start out with a complete simulation and then gradually replace its models with real hardware, i.e. hardware in the loop (HIL).
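The key to gradually replacing models with hardware is that the test bench talks to both through the same interface. Here is a minimal Python sketch of that idea using `typing.Protocol`; the sun sensor example, the class names and the bias value are hypothetical, and the `read_raw` callable stands in for whatever driver actually queries the real unit.

```python
from typing import Callable, Protocol


class SunSensor(Protocol):
    """Interface the test bench relies on, regardless of what sits behind it."""

    def read_angle_deg(self) -> float: ...


class SimulatedSunSensor:
    """Pure model, used before any hardware exists."""

    def __init__(self, true_angle_deg: float, bias_deg: float = 0.5):
        self.true_angle_deg = true_angle_deg
        self.bias_deg = bias_deg

    def read_angle_deg(self) -> float:
        return self.true_angle_deg + self.bias_deg


class HardwareSunSensor:
    """Hypothetical wrapper around the real unit once it arrives on the bench."""

    def __init__(self, read_raw: Callable[[], str]):
        # read_raw stands in for the actual driver call, e.g. a serial query.
        self._read_raw = read_raw

    def read_angle_deg(self) -> float:
        return float(self._read_raw())


def pointing_within_tolerance(sensor: SunSensor, target_deg: float, tolerance_deg: float = 1.0) -> bool:
    """The same test runs unchanged against the model or the hardware."""
    return abs(sensor.read_angle_deg() - target_deg) <= tolerance_deg


print(pointing_within_tolerance(SimulatedSunSensor(true_angle_deg=10.0), target_deg=10.0))  # True
print(pointing_within_tolerance(HardwareSunSensor(lambda: "12.5"), target_deg=10.0))  # False
```

Because the test only depends on `read_angle_deg`, swapping the model for the real unit does not require touching the test itself.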

Some tools that can help you with test-driven engineering:

- LabVIEW / Simulink – Directly drive hardware in the loop through visual programming, where non-existing hardware can easily be replaced with models

- Python (and other scripting languages) – Quickly write and adapt scripts that access hardware, stimulate software, etc., and write results into data repositories
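For the "write results into data repositories" part, here is a Python sketch that uses the standard-library `sqlite3` module as a stand-in for whatever repository your team actually uses. The table layout and function names are assumptions for illustration; a real setup might also store run IDs, hardware serial numbers or software versions so that results stay traceable.

```python
import sqlite3
from datetime import datetime, timezone


def open_results_db(path=":memory:"):
    """Open (or create) a results database; ':memory:' keeps it in RAM for this demo."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS results (
               run_at    TEXT    NOT NULL,
               test_name TEXT    NOT NULL,
               value     REAL,
               passed    INTEGER NOT NULL
           )"""
    )
    return conn


def record_result(conn, test_name, value, passed):
    """Store one measurement with a UTC timestamp."""
    run_at = datetime.now(timezone.utc).isoformat(timespec="seconds")
    with conn:  # commits the transaction if the insert succeeds
        conn.execute(
            "INSERT INTO results (run_at, test_name, value, passed) VALUES (?, ?, ?, ?)",
            (run_at, test_name, value, int(passed)),
        )


def pass_rate(conn, test_name):
    """Fraction of recorded runs that passed, or None if the test was never run."""
    (rate,) = conn.execute(
        "SELECT AVG(passed) FROM results WHERE test_name = ?", (test_name,)
    ).fetchone()
    return rate


conn = open_results_db()
record_result(conn, "bus_voltage", 28.1, passed=True)
record_result(conn, "bus_voltage", 26.4, passed=False)
print(pass_rate(conn, "bus_voltage"))  # 0.5
```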

Conclusion

Many companies use a mix of both approaches to compensate for the shortcomings of each. As a general guideline you could say:

If it is very clear what you are building and you have experience doing it, lean more toward the test-driven approach to optimize your product in short iteration cycles. It works best when you are a vertically integrated company that can quickly react to changes and modify equipment in-house. Make sure you have a process in place for handling non-testable assumptions and for managing changes that affect multiple teams.

If your suppliers are developing new equipment for you, or your team has not yet built many products of this type, use requirements engineering. Thinking about the problem in depth and working on the breakdown will already provide many insights to validate concepts, without having to simulate or build a single piece of equipment. Just don’t rely on theory alone or overdo it. Paper is very patient, so start building something to validate your designs in reality as well.

Some companies apply heavy requirements engineering in the early phase, and only down to subsystem level, to arrive at a consistent design and consistent interfaces. The theoretical design can then easily be tracked against those subsystem requirements, while simulations and hardware tests are run iteratively to verify those requirements directly every step of the way.
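The hybrid setup described above can be sketched as follows: subsystem requirements carry a numeric limit, and every simulation or hardware test run updates their verification status directly. This Python sketch assumes results arrive as a simple dictionary of named values; the requirement IDs, parameter names and numbers are hypothetical.

```python
import operator
from dataclasses import dataclass

COMPARATORS = {"<=": operator.le, ">=": operator.ge, "<": operator.lt, ">": operator.gt}


@dataclass(frozen=True)
class SubsystemRequirement:
    req_id: str
    parameter: str  # name of the simulated or measured quantity
    op: str         # one of the keys in COMPARATORS
    limit: float


def verification_status(requirements, latest_results):
    """Map each requirement ID to 'verified', 'failed' or 'open' (no result yet)."""
    status = {}
    for req in requirements:
        value = latest_results.get(req.parameter)
        if value is None:
            status[req.req_id] = "open"
        elif COMPARATORS[req.op](value, req.limit):
            status[req.req_id] = "verified"
        else:
            status[req.req_id] = "failed"
    return status


# Hypothetical subsystem requirements and results from the latest nightly run.
requirements = [
    SubsystemRequirement("EPS-1", "peak_power_w", ">=", 40.0),
    SubsystemRequirement("ADCS-2", "pointing_error_deg", "<=", 0.5),
    SubsystemRequirement("COM-3", "downlink_rate_mbps", ">=", 10.0),
]
latest_results = {"peak_power_w": 42.3, "pointing_error_deg": 0.7}

print(verification_status(requirements, latest_results))
# {'EPS-1': 'verified', 'ADCS-2': 'failed', 'COM-3': 'open'}
```

Keeping "open" separate from "failed" makes it visible which requirements still lack any test or simulation coverage.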